As artificial intelligence adoption expands across industries, executive leaders are placing greater emphasis on AI governance and responsible deployment. While AI offers significant benefits in automation, personalization, and predictive insight, it also introduces ethical, regulatory, and operational risks that require structured oversight.

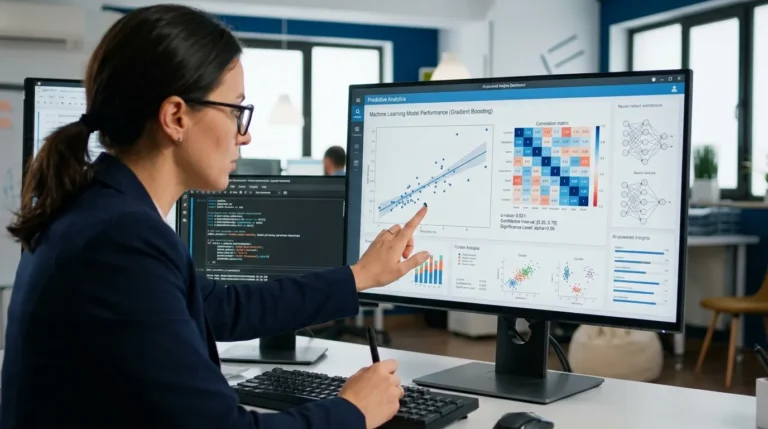

Organizations increasingly recognize that AI systems influence critical decisions, from credit approvals and hiring recommendations to healthcare diagnostics and supply chain optimization. Ensuring fairness, transparency, and accountability has therefore become a strategic priority.

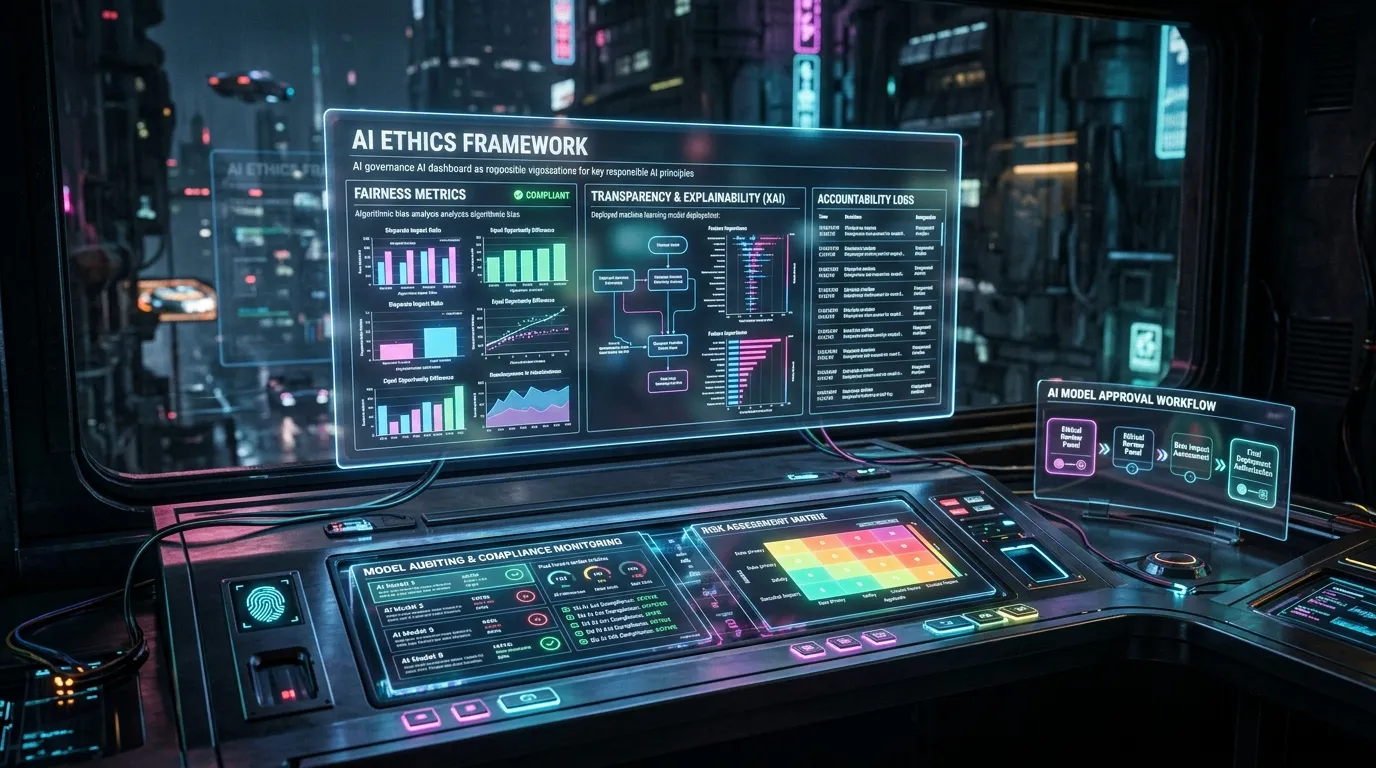

AI governance refers to the policies, frameworks, and controls that guide how AI systems are developed, deployed, and monitored. It encompasses ethical considerations, regulatory compliance, risk management, and performance oversight.

Technology providers such as IBM and Microsoft have introduced responsible AI toolkits that support bias detection, model explainability, and lifecycle management.

Core pillars of AI governance typically include:

- Bias detection and mitigation

- Model transparency and explainability

- Data quality validation

- Continuous performance monitoring

- Human oversight and accountability

Bias remains one of the most discussed challenges. AI systems trained on unbalanced datasets may produce discriminatory outcomes. Governance frameworks require rigorous testing to identify and correct such issues before deployment.
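One simple form such pre-deployment testing can take is comparing how often a model returns a favorable decision for each group. The sketch below is illustrative only: the group labels, the sample decisions, and the 0.8 review threshold (echoing the commonly cited four-fifths rule of thumb) are assumptions, and a real review would combine several fairness metrics with domain judgment.

```python
from collections import defaultdict


def selection_rates(groups, predictions):
    """Return the share of positive predictions (1) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, pred in zip(groups, predictions):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest to the highest group selection rate (1.0 = parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group receives positive outcomes, so rates are equal
    return min(rates.values()) / highest


# Hypothetical data: a group label and a binary model decision per applicant.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
predictions = [1, 1, 1, 0, 1, 0, 0, 0]

rates = selection_rates(groups, predictions)
ratio = disparate_impact_ratio(rates)

REVIEW_THRESHOLD = 0.8  # assumed policy value, not a universal standard
needs_review = ratio < REVIEW_THRESHOLD
print(rates, round(ratio, 3), needs_review)
```

A ratio well below the chosen threshold does not prove discrimination, but it is a reasonable trigger for the deeper review a governance process would call for.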

Explainability is equally critical. Stakeholders increasingly demand clarity on how AI models arrive at decisions, particularly in regulated sectors. Black-box algorithms without interpretability may face resistance from regulators and customers alike.
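One model-agnostic way to give stakeholders a first view into a black-box model is permutation importance: shuffle one input at a time and measure how much accuracy drops. The following is a minimal sketch under stated assumptions; `toy_model`, its two features, and the sample rows are hypothetical stand-ins, not any vendor's toolkit.

```python
import random


def accuracy(model, rows, labels):
    """Fraction of rows where the model's decision matches the label."""
    correct = sum(model(row) == label for row, label in zip(rows, labels))
    return correct / len(rows)


def permutation_importance(model, rows, labels, n_features, seed=0):
    """Accuracy drop observed when each feature column is shuffled in turn."""
    rng = random.Random(seed)  # fixed seed so the report is repeatable
    baseline = accuracy(model, rows, labels)
    importances = []
    for j in range(n_features):
        column = [row[j] for row in rows]
        rng.shuffle(column)
        permuted = [
            row[:j] + (value,) + row[j + 1:]
            for row, value in zip(rows, column)
        ]
        importances.append(baseline - accuracy(model, permuted, labels))
    return importances


def toy_model(row):
    """Hypothetical approval rule that looks only at the first feature."""
    income, _unused_feature = row
    return 1 if income >= 50 else 0


# Hypothetical rows: (income, an unrelated second feature).
rows = [(income, income % 7) for income in range(20, 80)]
labels = [toy_model(row) for row in rows]

importances = permutation_importance(toy_model, rows, labels, n_features=2)
print(importances)
```

Here the second feature scores zero because the toy model ignores it. In a real review, an unexpectedly influential feature would be the finding worth escalating.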

Cloud infrastructure also supports governance. Platforms such as Google Cloud provide monitoring tools that track model performance and data drift across deployment environments.

Data governance intersects closely with AI governance. Models are only as reliable as the data they consume. Clear documentation of data sources, lineage tracking, and access controls strengthen trust in AI outputs.

Regulatory frameworks addressing AI transparency and accountability are evolving globally. Enterprises must remain adaptable as compliance expectations shift.

Key challenges in AI governance include:

- Balancing innovation speed with oversight

- Integrating governance across multiple AI tools

- Maintaining documentation for audit readiness

- Aligning cross-functional teams

Governance requires collaboration between data scientists, legal teams, risk managers, and executive leadership.

Ethical considerations extend beyond regulatory compliance. Responsible AI deployment strengthens brand reputation and stakeholder confidence.

Organizations are establishing AI ethics committees and review boards to evaluate high-impact use cases before implementation.

Continuous monitoring is essential. AI models may degrade over time as real-world conditions change, a phenomenon known as model drift. Automated alerts help detect declining performance.
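As a rough illustration of how an automated alert might work, the sketch below compares a feature's distribution at training time with its distribution in production using the Population Stability Index (PSI). The sample values, the bin count, and the 0.25 alert threshold are assumptions chosen for illustration; teams typically tune these per model.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a recent sample of one feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        eps = 1e-6  # floor so empty bins do not produce log(0)
        return [max(c / len(values), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical feature values: training-time baseline vs. two later windows.
baseline = [i / 100 for i in range(100)]
stable_window = list(baseline)
shifted_window = [0.5 + i / 200 for i in range(100)]

ALERT_THRESHOLD = 0.25  # assumed rule-of-thumb value, not a standard

psi_stable = population_stability_index(baseline, stable_window)
psi_shifted = population_stability_index(baseline, shifted_window)
print(psi_stable > ALERT_THRESHOLD, psi_shifted > ALERT_THRESHOLD)
```

A check like this could run on a schedule, with any breach routed to the model's accountable owner rather than triggering automatic retraining.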

Security considerations also intersect with AI governance. Protecting models from adversarial manipulation and safeguarding sensitive training data remain critical.

Industry analysts emphasize that governance maturity differentiates sustainable AI leaders from experimental adopters.

Transparency reports and documented oversight processes demonstrate commitment to responsible innovation.

As AI systems increasingly influence enterprise operations, structured governance frameworks provide safeguards against unintended consequences.

AI governance is not intended to slow innovation but to ensure it progresses responsibly.

Organizations that embed accountability, transparency, and continuous evaluation into AI strategy build long-term trust and resilience.

Responsible AI is rapidly becoming a strategic imperative, shaping how enterprises balance innovation with ethical responsibility.