As artificial intelligence initiatives expand across industries, enterprises are rethinking infrastructure strategies to support increasingly complex computational demands. What began as isolated machine learning experiments has evolved into large-scale AI deployment across customer service, cybersecurity, supply chain optimization, and product development. Supporting these workloads requires infrastructure that is not only scalable but purpose-built for high-performance computing.

Traditional enterprise infrastructure was designed primarily for transactional workloads and database management. AI workloads, however, demand a different architecture. Training advanced machine learning models requires massive parallel processing capabilities, high-speed storage systems, and low-latency data pipelines. This shift has elevated AI-ready infrastructure from a technical upgrade to a board-level priority.

Cloud providers have responded by expanding specialized AI infrastructure services. Platforms such as Amazon Web Services and Microsoft Azure offer GPU-accelerated instances and managed AI environments designed to reduce the complexity of model training and deployment. These services enable enterprises to scale compute resources dynamically based on workload intensity.
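Scaling "based on workload intensity" is often expressed as a target-tracking rule: measure demand, divide by what one instance can handle, and clamp the result. The sketch below illustrates that idea only. It is not any cloud provider's API, and it assumes workload intensity is measured as queued jobs. `desired_instances`, `jobs_per_instance`, and the default bounds are hypothetical.

```python
import math


def desired_instances(pending_jobs: int, jobs_per_instance: int,
                      min_instances: int = 1, max_instances: int = 16) -> int:
    """Hypothetical target-tracking rule that sizes a GPU pool to a job queue.

    Assumes each instance can absorb `jobs_per_instance` queued jobs per
    planning interval. The result is clamped to [min_instances, max_instances].
    """
    if jobs_per_instance <= 0:
        raise ValueError("jobs_per_instance must be positive")
    # Round up so a partially filled instance is still provisioned.
    needed = math.ceil(pending_jobs / jobs_per_instance)
    # The floor keeps warm capacity available; the ceiling caps spend.
    return max(min_instances, min(max_instances, needed))


if __name__ == "__main__":
    for queue in (0, 10, 100):
        print(queue, "->", desired_instances(queue, jobs_per_instance=4))
```

With four jobs per instance, a queue of 10 yields 3 instances, an empty queue still keeps 1 warm instance, and a queue of 100 is capped at 16. Real managed autoscalers add cooldowns and smoothing that this sketch omits.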

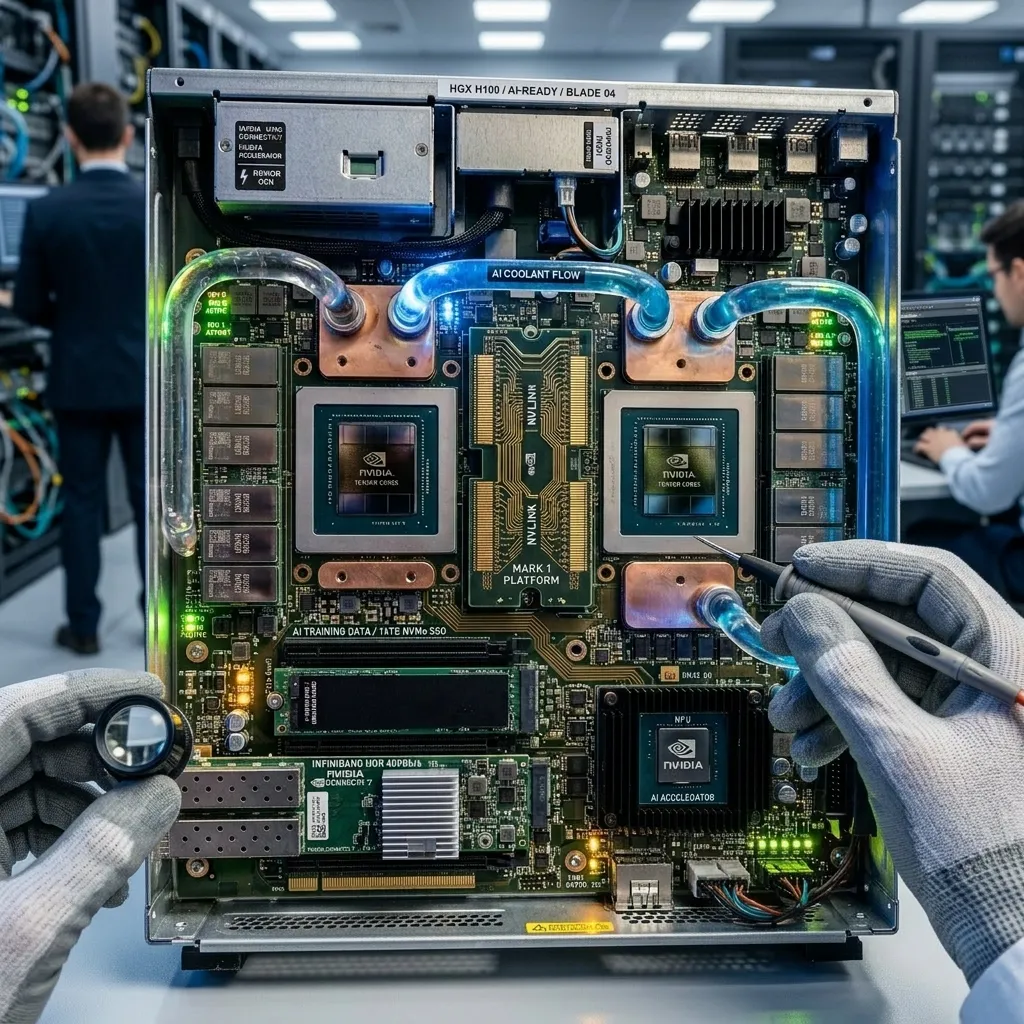

Hardware innovation is also playing a central role. Companies like NVIDIA continue to advance high-performance GPUs and AI-optimized processors that significantly accelerate training cycles. Dedicated AI chips are increasingly integrated into cloud and on-premises infrastructure, improving efficiency for both inference and large-scale model training.

AI-ready infrastructure involves more than raw computing power. Data architecture is equally critical. Machine learning systems require access to clean, well-structured datasets. Enterprises are investing in modern data lakes and high-speed storage systems capable of handling both structured and unstructured data at scale.

Key components of AI-ready infrastructure typically include:

- GPU-accelerated computing clusters

- High-bandwidth networking

- Scalable object storage systems

- Real-time data ingestion pipelines

- Advanced cybersecurity controls

Security considerations are particularly important in AI environments. Training models often involves sensitive customer or operational data. Strong encryption, identity management, and compliance frameworks must be embedded directly into infrastructure design.

Enterprises are also adopting hybrid AI strategies. While many training workloads run in public cloud environments, some organizations keep sensitive inference workloads on private infrastructure for compliance reasons. Hybrid deployment models can provide this flexibility while maintaining regulatory alignment.
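A hybrid strategy ultimately becomes a placement policy that decides where each workload runs. The sketch below encodes only the rule described in the paragraph above: sensitive inference stays private, and everything else may use public-cloud capacity. The `Workload` fields and the `placement` function are hypothetical. A real policy would likely weigh more factors, such as data residency or latency.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    kind: str                     # "training" or "inference"
    handles_sensitive_data: bool  # e.g. regulated customer records


def placement(workload: Workload) -> str:
    """Hypothetical hybrid policy: keep sensitive inference on private infrastructure."""
    if workload.kind not in ("training", "inference"):
        raise ValueError(f"unknown workload kind: {workload.kind}")
    if workload.kind == "inference" and workload.handles_sensitive_data:
        return "private"
    # Training and non-sensitive inference may use elastic public-cloud capacity.
    return "public-cloud"


if __name__ == "__main__":
    jobs = [
        Workload("demand-forecast-training", "training", False),
        Workload("fraud-scoring-api", "inference", True),
        Workload("product-recommendations", "inference", False),
    ]
    for job in jobs:
        print(f"{job.name}: {placement(job)}")
```

Keeping the policy in one small function makes it easy to audit, which matters when compliance teams need to confirm where regulated data is processed.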

Operational complexity remains a challenge. Managing distributed AI infrastructure across cloud regions requires advanced monitoring tools. Observability platforms provide visibility into performance metrics such as latency, throughput, and resource utilization.
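The three metrics named above can be computed from simple inputs for one monitoring window. The sketch below assumes a list of per-request latencies and a GPU busy-time counter. The function name and its inputs are hypothetical, not the interface of any particular observability platform. It uses the nearest-rank definition of the 95th percentile.

```python
from dataclasses import dataclass


@dataclass
class WindowSummary:
    p95_latency_ms: float
    throughput_rps: float
    gpu_utilization: float


def summarize(latencies_ms: list[float], window_seconds: float,
              gpu_busy_seconds: float, gpu_count: int) -> WindowSummary:
    """Summarize one monitoring window from hypothetical request-log and GPU-counter inputs."""
    if not latencies_ms or window_seconds <= 0 or gpu_count <= 0:
        raise ValueError("need at least one request, a positive window, and at least one GPU")
    ordered = sorted(latencies_ms)
    # Nearest-rank 95th percentile: rank = ceil(0.95 * n), computed with integers.
    rank = (95 * len(ordered) + 99) // 100
    p95 = ordered[rank - 1]
    # Throughput: completed requests per second over the window.
    throughput = len(ordered) / window_seconds
    # Utilization: fraction of available GPU-seconds that were busy.
    utilization = gpu_busy_seconds / (gpu_count * window_seconds)
    return WindowSummary(p95, throughput, utilization)


if __name__ == "__main__":
    latencies = [float(ms) for ms in range(10, 210, 10)]  # 20 requests
    print(summarize(latencies, window_seconds=10.0, gpu_busy_seconds=30.0, gpu_count=4))
```

For 20 requests between 10 and 200 ms over 10 seconds on four GPUs busy for 30 GPU-seconds in total, this reports a p95 of 190 ms, 2 requests per second, and 75% utilization.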

Cost management is another strategic consideration. AI workloads can generate significant computing expenses if not optimized. Enterprises are implementing workload scheduling systems and cost-monitoring tools to maintain efficiency.
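At its simplest, a cost-monitoring tool multiplies GPU count, runtime, and price, then compares the total against a budget. The sketch below assumes a flat per-GPU-hour rate. `GpuJob`, `spend_report`, and the prices in the example are hypothetical, since real rates vary by provider, region, and instance type.

```python
from dataclasses import dataclass


@dataclass
class GpuJob:
    name: str
    gpu_count: int
    hours: float
    rate_per_gpu_hour: float  # hypothetical flat price in USD

    def cost(self) -> float:
        return self.gpu_count * self.hours * self.rate_per_gpu_hour


def spend_report(jobs: list[GpuJob], budget: float) -> tuple[float, bool, list[str]]:
    """Return total spend, whether it exceeds the budget, and job names by cost (highest first)."""
    total = sum(job.cost() for job in jobs)
    ranked = [job.name for job in sorted(jobs, key=lambda j: j.cost(), reverse=True)]
    return total, total > budget, ranked


if __name__ == "__main__":
    jobs = [
        GpuJob("nightly-retrain", gpu_count=8, hours=10.0, rate_per_gpu_hour=2.5),
        GpuJob("eval-suite", gpu_count=1, hours=2.0, rate_per_gpu_hour=2.5),
    ]
    total, over, ranked = spend_report(jobs, budget=200.0)
    print(f"total=${total:.2f} over_budget={over} top_spenders={ranked}")
```

Ranking jobs by cost points a scheduler at the best candidates for optimization, such as moving the most expensive retraining runs to cheaper capacity or off-peak windows.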

Industry analysts note that AI-ready infrastructure increasingly defines digital competitiveness. Organizations capable of training and deploying models rapidly can innovate faster, personalize services more effectively, and respond to market changes proactively.

Workforce skills are also evolving alongside infrastructure requirements. Engineers and IT teams must develop expertise in GPU orchestration, distributed computing frameworks, and cloud-native AI tools.

AI infrastructure is no longer confined to research labs. It is becoming a foundational enterprise capability supporting predictive analytics, automation, and intelligent customer engagement.

As AI adoption accelerates, infrastructure decisions will shape long-term innovation capacity. Enterprises that build scalable, secure, and high-performance AI environments position themselves to leverage advanced analytics as a core strategic asset.

AI-ready infrastructure is not simply an enhancement to existing systems; it represents a new generation of enterprise computing designed for intelligent operations.