Autonomous artificial intelligence systems are evolving rapidly, moving beyond narrow task automation toward more adaptive, self-directed capabilities. Artificial General Intelligence (AGI), meaning systems capable of human-level reasoning across domains, remains a long-term research objective, but progress in autonomous AI architectures is accelerating across enterprise and research environments.

Traditional AI models operate within predefined constraints, performing specific tasks such as image recognition, language translation, or predictive analytics. Autonomous AI systems, however, integrate reasoning, planning, memory, and self-improvement mechanisms to execute multi-step objectives with limited human intervention.
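As a rough illustration of how planning, memory, and execution might fit together, the sketch below runs a multi-step objective through a toy agent. The `Agent` class, its `plan` and `run` methods, and the tool names are hypothetical and chosen for illustration only. Real systems typically replace the hand-written planner with a learned model.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    """Toy agent (hypothetical): plans steps, executes tools, records results."""
    tools: dict[str, Callable[[int], int]]
    memory: list[tuple[str, int]] = field(default_factory=list)

    def plan(self, objective: list[str]) -> list[str]:
        # "Planning" here just filters the objective down to steps
        # the agent has a tool for; unknown steps are skipped.
        return [step for step in objective if step in self.tools]

    def run(self, objective: list[str], value: int) -> int:
        # Execute each planned step in order, feeding the output of
        # one step into the next and storing every result in memory.
        for step in self.plan(objective):
            value = self.tools[step](value)
            self.memory.append((step, value))
        return value


agent = Agent(tools={"double": lambda x: x * 2, "increment": lambda x: x + 1})
result = agent.run(["double", "unknown", "increment"], 3)
print(result)        # 7
print(agent.memory)  # [('double', 6), ('increment', 7)]
```

The memory list is what lets a later step, or a human reviewer, inspect what the agent did without re-running it.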

Organizations such as OpenAI and DeepMind are advancing research into large-scale models capable of generalized reasoning and complex problem-solving.

Key areas of development in autonomous AI include:

- Multi-agent coordination systems

- Long-context memory models

- Reinforcement learning optimization

- Self-supervised learning frameworks

- AI-driven code and research automation

Multi-agent AI systems are being designed to collaborate, delegate tasks, and adapt dynamically to changing environments. These architectures split a problem across specialized agents so that sub-tasks can be handled separately and their results combined, enabling coordinated problem-solving across large computational systems.
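Delegation among agents can be sketched in a few lines. The example below assumes a simple setup that is not taken from any particular framework: a hypothetical `Coordinator` routes each task to a `Worker` that advertises the required skill, preferring whichever qualified worker has handled the fewest tasks so far.

```python
from dataclasses import dataclass, field


@dataclass
class Worker:
    """Hypothetical worker agent with a fixed set of skills."""
    name: str
    skills: set[str]
    completed: list[str] = field(default_factory=list)

    def handle(self, task: str) -> str:
        self.completed.append(task)
        return f"{self.name} handled {task}"


class Coordinator:
    """Hypothetical coordinator that delegates tasks by skill and load."""

    def __init__(self, workers: list[Worker]) -> None:
        self.workers = workers

    def delegate(self, task: str, skill: str) -> str:
        candidates = [w for w in self.workers if skill in w.skills]
        if not candidates:
            raise LookupError(f"no worker has skill {skill!r}")
        # Pick the least-loaded qualified worker; on a tie, min() keeps
        # the first candidate in list order.
        worker = min(candidates, key=lambda w: len(w.completed))
        return worker.handle(task)


workers = [Worker("analyst", {"parse"}), Worker("planner", {"parse", "plan"})]
coordinator = Coordinator(workers)
print(coordinator.delegate("read report", "parse"))
print(coordinator.delegate("read appendix", "parse"))
print(coordinator.delegate("draft schedule", "plan"))
```

Routing by load is only one possible policy. A system that adapts to changing environments would likely also account for worker failures and task priorities.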

Reinforcement learning continues to play a central role in developing autonomous behavior. AI agents learn strategies by trial and error: they act in an environment (often a simulated one), receive reward signals, and iteratively adjust their behavior to maximize cumulative reward.
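To make the idea of learning through iterative interaction concrete, here is a minimal sketch of tabular Q-learning, one common reinforcement learning algorithm. The environment is an assumption made up for this example: a five-state corridor where the agent starts at the left end and receives a reward of 1 on reaching the right end. The function names and hyperparameter values are illustrative, not tuned.

```python
import random

N_STATES = 5        # states 0..4; state 4 is the goal (assumed toy environment)
ACTIONS = (-1, +1)  # action index 0 moves left, index 1 moves right


def step(state: int, action_index: int) -> tuple[int, float, bool]:
    """Simulated environment: move along the corridor, clamped at the walls."""
    next_state = min(max(state + ACTIONS[action_index], 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    reward = 1.0 if done else 0.0
    return next_state, reward, done


def train(episodes: int = 500, alpha: float = 0.5, gamma: float = 0.9,
          epsilon: float = 0.2, seed: int = 0) -> list[list[float]]:
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # Q-table: q[state][action]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: explore sometimes, otherwise act greedily,
            # breaking ties between equal Q-values at random.
            if rng.random() < epsilon:
                action = rng.randrange(len(ACTIONS))
            else:
                best = max(q[state])
                action = rng.choice(
                    [i for i, v in enumerate(q[state]) if v == best])
            next_state, reward, done = step(state, action)
            # Q-learning update toward reward plus discounted best next value.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q


def greedy_policy(q: list[list[float]]) -> list[str]:
    """Preferred action for each non-terminal state."""
    return ["right" if row[1] > row[0] else "left" for row in q[:-1]]


q_table = train()
print(greedy_policy(q_table))
```

With these settings the learned policy should prefer moving right in every non-terminal state, since that is the only route to the reward. Larger problems usually replace the table with a neural network, but the interact-and-update loop keeps the same shape.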

Cloud computing infrastructure providers such as Microsoft are integrating advanced AI capabilities into enterprise ecosystems, supporting experimentation and scalable deployment.

Enterprise applications of autonomous AI are expanding into:

- Automated software development

- Complex data analysis

- Financial modeling

- Supply chain optimization

- Cybersecurity threat response

However, autonomy introduces governance challenges. Key concerns include:

- AI decision transparency

- Safety alignment mechanisms

- Model hallucination risk

- Ethical boundaries in self-directed systems

Researchers are actively working on alignment techniques to ensure AI systems operate within defined ethical and operational constraints.

AI safety research is emerging as a parallel priority alongside capability development.

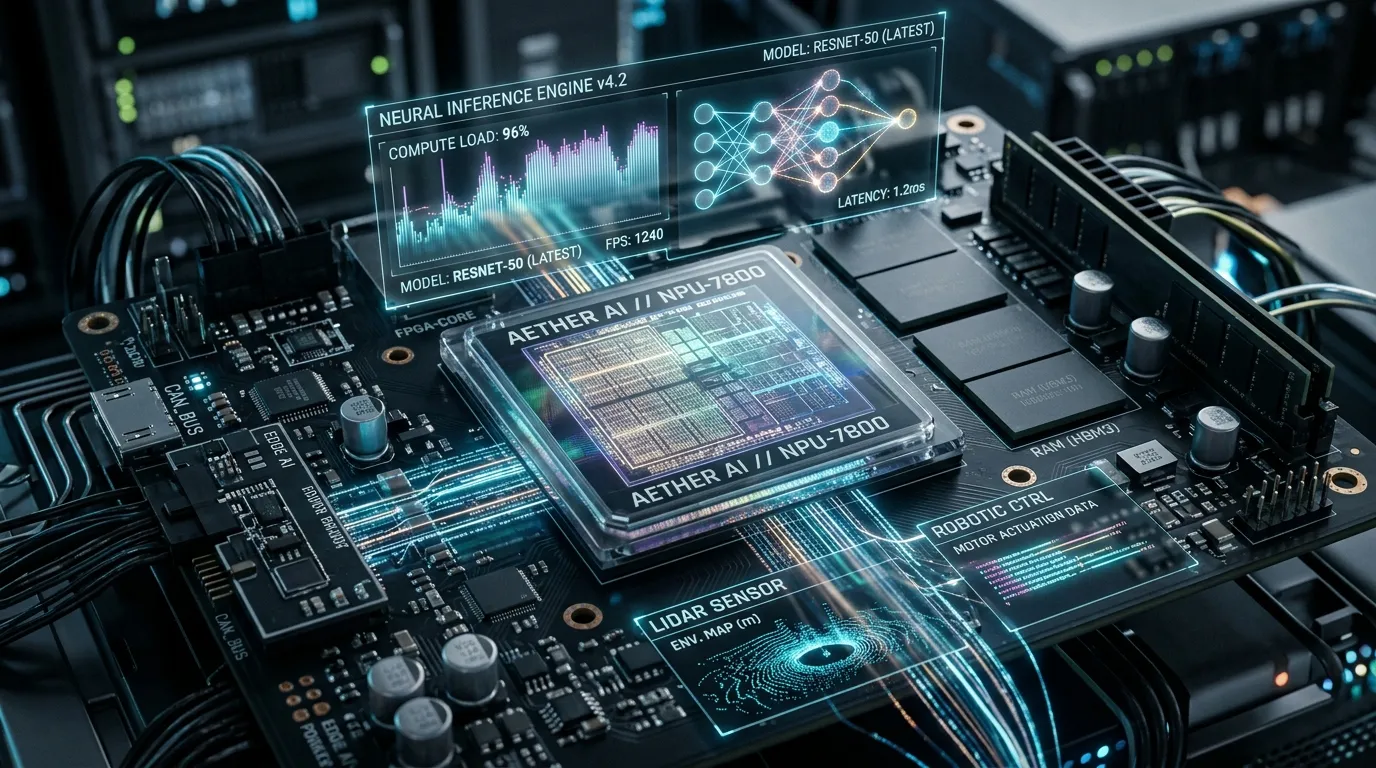

Large-scale model training requires substantial computational resources, often relying on advanced GPUs and distributed data centers.

Companies such as NVIDIA provide high-performance computing hardware that powers large AI training clusters.

Predictions about AGI timelines remain speculative. While narrow AI continues to advance rapidly, achieving generalized intelligence comparable to human cognition presents profound scientific challenges.

Regulatory bodies are monitoring AI autonomy developments, particularly in high-risk domains such as defense and healthcare.

Enterprise adoption is likely to proceed cautiously, with autonomous AI systems initially deployed in supervised environments.

Despite uncertainty regarding AGI timelines, incremental advances in autonomous AI capabilities are already influencing enterprise productivity and research acceleration.

Autonomous AI systems represent a defining frontier of deep tech, characterized by high computational demands, advanced mathematical research, and transformative potential.

As AI models become more capable of long-horizon planning and contextual reasoning, the boundary between tool and collaborator continues to blur.

The coming decade will likely see rapid expansion of semi-autonomous AI systems within enterprise environments, even as full AGI remains a research objective.

Balancing innovation with safety and governance will determine the long-term trajectory of autonomous AI development.